Starting at $0.33 per hour, the NVIDIA A40 is a mid-range option for users who need VRAM capacity without stepping up to premium data center GPUs.

That said, NVIDIA A40 pricing varies significantly, and only a few providers offer the card.

However, pricing alone doesn't tell the full story. On a performance-per-dollar basis, other Ampere-architecture GPUs can offer substantially better value.

| Provider | NVIDIA A40 Price |
|---|---|
| TensorDock | $0.33/hr |
| RunPod | $0.44/hr |
| Vast.ai | $0.42/hr |
| Crusoe Cloud | $0.90/hr |
| Vultr | $1.71/hr |

Methodology (why you can trust these numbers)

<ul><li>Pricing reflects <strong>public on-demand rates</strong> as of May 2026.</li><li>Only <strong>single-GPU comparable configurations</strong> were included.</li><li>Marketplace pricing reflects <strong>live listings</strong>, which may vary.</li><li>Provider pricing excludes reserved or spot discounts.</li></ul>

Why this matters for developers

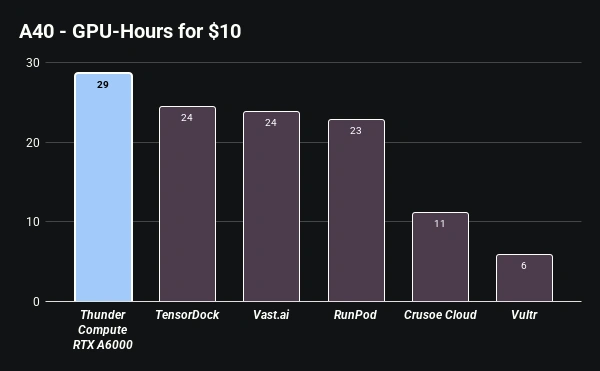

Here's a comparison of the cost of running an NVIDIA A40 for 10 hours across major cloud providers.
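The 10-hour figures follow directly from the hourly rates in the table. Below is a minimal sketch of that arithmetic, assuming flat on-demand billing with no storage, egress, or minimum-usage charges. The names `A40_HOURLY_USD`, `run_cost`, and `cost_table` are illustrative helpers, not part of any provider's API.

```python
# Hourly on-demand A40 rates copied from the pricing table (USD per hour).
A40_HOURLY_USD = {
    "TensorDock": 0.33,
    "RunPod": 0.44,
    "Vast.ai": 0.42,
    "Crusoe Cloud": 0.90,
    "Vultr": 1.71,
}


def run_cost(hourly_rate: float, hours: float) -> float:
    """Cost of one GPU for `hours` at a flat hourly rate, rounded to cents."""
    return round(hourly_rate * hours, 2)


def cost_table(rates: dict[str, float], hours: float) -> list[tuple[str, float]]:
    """Return (provider, cost) pairs sorted from cheapest to most expensive."""
    pairs = ((name, run_cost(rate, hours)) for name, rate in rates.items())
    return sorted(pairs, key=lambda pair: pair[1])


if __name__ == "__main__":
    # Assumption: a single 10-hour session billed purely by the hour.
    for provider, cost in cost_table(A40_HOURLY_USD, hours=10):
        print(f"{provider:<14} ${cost:.2f}")
```

Changing `hours` gives the same comparison for longer jobs. Marketplace listings may have moved by the time you run it, so treat the dictionary as a snapshot.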

Thunder Compute offers NVIDIA RTX A6000 GPUs for $0.35/hr, which deliver performance similar to the NVIDIA A40.

Choosing a GPU like the A40 without evaluating alternatives can result in higher costs for the same output, especially when better options exist at similar prices.
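One way to make "higher costs for the same output" concrete is a break-even ratio. Cost per unit of work is price divided by throughput, so an alternative GPU matches an A40 listing once its throughput reaches `alt_price / a40_price` of the A40's. The sketch below uses only the prices quoted in this article. The function name `break_even_throughput` is hypothetical, and no benchmark data is assumed.

```python
def break_even_throughput(alt_hourly: float, a40_hourly: float) -> float:
    """Fraction of A40 throughput an alternative GPU needs in order to
    match the A40's cost per unit of work.

    Derivation: alt_price / alt_tp == a40_price / a40_tp
                => alt_tp / a40_tp == alt_price / a40_price
    """
    if alt_hourly <= 0 or a40_hourly <= 0:
        raise ValueError("hourly rates must be positive")
    return alt_hourly / a40_hourly


if __name__ == "__main__":
    rtx_a6000_hourly = 0.35  # Thunder Compute rate quoted in this article
    a40_rates = {"TensorDock": 0.33, "RunPod": 0.44, "Vultr": 1.71}
    for provider, rate in a40_rates.items():
        ratio = break_even_throughput(rtx_a6000_hourly, rate)
        print(f"vs A40 on {provider}: needs {ratio:.0%} of A40 throughput")
```

A ratio below 1.0 means the alternative comes out ahead even if it is somewhat slower than the A40. A ratio above 1.0 means it must be faster to justify its price.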

Takeaways

<ul><li>The <strong>NVIDIA A40 price</strong> ranges from <strong>$0.33/hr to $1.71/hr</strong> depending on the provider.</li><li>Availability is limited compared to more common GPUs like the A100 or T4.</li><li>Marketplace providers offer lower prices but may have infrastructure tradeoffs.</li><li>Performance per dollar is often <strong>worse than that of newer or workstation-class alternatives</strong>.</li><li>The A40 is increasingly being replaced by GPUs like the L40 and RTX A6000.</li></ul>

See where data center A40 allocation stands amid infrastructure shifts in our AI GPU rental market trends guide.

FAQ

What is the NVIDIA A40 price per hour in 2026?

As of May 2026, the NVIDIA A40 price ranges from $0.33/hr on TensorDock to $1.71/hr on Vultr, depending on the provider and infrastructure.

How does A40 pricing compare to the RTX A6000?

The RTX A6000 is generally more cost-effective: it starts at $0.35/hr on Thunder Compute and offers similar performance and the same 48GB of VRAM at a lower price than most A40 listings.

Is the NVIDIA A40 good for AI training?

While the A40 can handle mid-scale AI training with its 48GB VRAM and NVLink support, GPUs like the A100 or L40 offer significantly higher memory bandwidth and throughput for intensive training tasks.