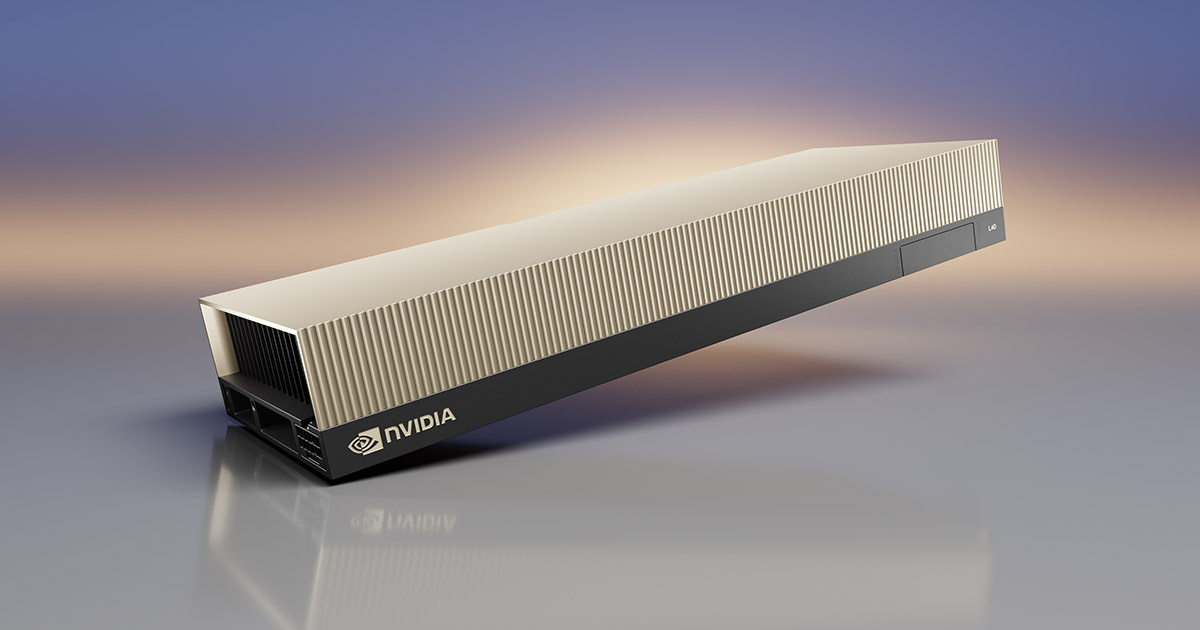

The NVIDIA L40 and L40S are data center GPUs built on the Ada Lovelace architecture, each with 48 GB of GDDR6 memory. They target AI inference, machine learning, and simulation workloads.

The NVIDIA L40 price starts at $0.58/hr, while NVIDIA L40S pricing starts at $0.61/hr. Most providers list only the L40S, so its pricing is much easier to compare across providers than the base L40's.

| Provider | NVIDIA L40 Price (per GPU-hour) | NVIDIA L40S Price (per GPU-hour) |
|---|---|---|
| Vast.ai* | $0.58 | $0.61 |
| Thunder Compute | $0.89 | $0.99 |
| RunPod* | $0.99 | $0.86 |
| Sesterce | $0.99 | N/A |
| Crusoe Cloud | N/A | $1.00 |
| Hyperstack | N/A | $1.00 |
| Lyceum | N/A | $1.05 |
| DigitalOcean | N/A | $1.57 |
| Vultr | N/A | $1.67 |
| Nebius | N/A | $1.82 |
| AWS | N/A | $1.86 (g6e.xlarge) |
| Cerebrium | N/A | $1.95 |
| Modal | N/A | $1.95 |
| CoreWeave | $1.25 (x 8 GPUs) | $2.25 (x 8 GPUs) |
| Oracle Cloud | N/A | $3.50 (BM.GPU.L40S-NC.4) |
| Replicate | N/A | $3.51 |

Methodology (why you can trust these numbers)

<ul><li>Pricing reflects <strong>public on-demand rates and marketplace listings</strong> as of May 2026.</li><li>Only <strong>comparable public listings</strong> were included.</li><li>Where a provider lists <strong>multi-GPU nodes</strong>, the table keeps the provider's published per-GPU-equivalent rate.</li><li>Marketplace pricing reflects <strong>live availability</strong>, which may fluctuate.</li><li>Prices exclude reserved, spot, or long-term discounts.</li></ul>
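
For multi-GPU nodes, the per-GPU-equivalent rate in the table is not what you actually pay per hour, because the node is billed as a whole. The Python sketch below shows the arithmetic using the CoreWeave rows from the table. The helper names and the 730-hour month are assumptions made for illustration, not provider billing rules.

```python
# Assumption: an average month has 8760 / 12 = 730 hours of continuous use.
HOURS_PER_MONTH = 730


def node_hourly_cost(per_gpu_rate: float, gpus_per_node: int) -> float:
    """Hourly cost of a whole node, given its per-GPU-equivalent rate."""
    return per_gpu_rate * gpus_per_node


def monthly_cost(hourly_rate: float, hours: float = HOURS_PER_MONTH) -> float:
    """Cost of running at hourly_rate for the given number of hours."""
    return hourly_rate * hours


# CoreWeave rows from the table: $1.25 (L40) and $2.25 (L40S), each x 8 GPUs.
l40_node = node_hourly_cost(1.25, 8)
l40s_node = node_hourly_cost(2.25, 8)

print(f"L40 node:  ${l40_node:.2f}/hr")
print(f"L40S node: ${l40s_node:.2f}/hr, about ${monthly_cost(l40s_node):,.2f}/month")
```

A single-GPU listing and an 8-GPU node can show similar per-GPU rates while differing roughly eightfold in the minimum hourly commitment, which is worth checking before comparing rows.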

Takeaways

<ul><li>The <strong>NVIDIA L40 price</strong> ranges from <strong>$0.58/hr to $1.25/hr</strong> in our May 2026 snapshot.</li><li>The <strong>NVIDIA L40S price</strong> ranges from <strong>$0.61/hr to $3.51/hr</strong> in the same snapshot.</li><li>Most providers offer only the <strong>NVIDIA L40S</strong>; just five list the base L40.</li><li>Marketplace providers still offer the lowest starting prices, while premium platforms and hyperscalers sit higher.</li><li>In many cases, <strong>older or alternative GPUs offer better price-performance</strong>.</li></ul>
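
Price ranges like these can be recomputed from the table rows. The Python sketch below is one way to do it, with prices copied by hand from the table and `None` standing in for "N/A". The `PRICES` dictionary and `price_range` helper are illustrative names, and the calculation takes every listed row at face value, including multi-GPU node listings.

```python
# Per-GPU hourly prices (L40, L40S) copied from the table; None means "N/A".
PRICES = {
    "Vast.ai": (0.58, 0.61),
    "Thunder Compute": (0.89, 0.99),
    "RunPod": (0.99, 0.86),
    "Sesterce": (0.99, None),
    "Crusoe Cloud": (None, 1.00),
    "Hyperstack": (None, 1.00),
    "Lyceum": (None, 1.05),
    "DigitalOcean": (None, 1.57),
    "Vultr": (None, 1.67),
    "Nebius": (None, 1.82),
    "AWS": (None, 1.86),
    "Cerebrium": (None, 1.95),
    "Modal": (None, 1.95),
    "CoreWeave": (1.25, 2.25),
    "Oracle Cloud": (None, 3.50),
    "Replicate": (None, 3.51),
}


def price_range(column: int) -> tuple[float, float]:
    """Return (lowest, highest) listed price for column 0 (L40) or 1 (L40S)."""
    listed = [row[column] for row in PRICES.values() if row[column] is not None]
    return min(listed), max(listed)


# Providers that list the L40S but not the base L40.
l40s_only = [name for name, (l40, l40s) in PRICES.items()
             if l40 is None and l40s is not None]

for label, column in (("L40", 0), ("L40S", 1)):
    low, high = price_range(column)
    print(f"{label}: ${low:.2f} to ${high:.2f} per GPU-hour")
print(f"{len(l40s_only)} of {len(PRICES)} providers list only the L40S")
```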

To optimize your budget and discover which platforms offer the best value, explore our complete comparison of the cheapest cloud GPU providers.

FAQ

What's the cost of renting NVIDIA L40 GPUs?

In our May 2026 snapshot, the NVIDIA L40 price starts at $0.58/hr on Vast.ai and rises to $1.25/hr on CoreWeave, depending on the cloud provider and instance configuration.

What's the cost of renting NVIDIA L40S GPUs?

The NVIDIA L40S is available from $0.61/hr to $3.51/hr in May 2026, depending on the provider and deployment model.

Does the NVIDIA L40 support NVLink?

No, the NVIDIA L40 does not support NVLink. This lack of a high-speed GPU-to-GPU interconnect makes it less suitable for large distributed training jobs compared to GPUs like the A100.