The NVIDIA RTX A6000 offers a strong performance-to-cost ratio for GPU computing workflows. With 48 GB of VRAM and 10,752 CUDA cores, it is a solid alternative for engineers who need processing capacity without the overhead of enterprise hardware.

In this guide, we break down the market-wide pricing for the NVIDIA RTX A6000 to help you decide where to host your next workload.

Takeaways

<ul><li>The RTX A6000 is one of the best <strong>price-to-performance GPUs</strong> available in the cloud</li><li>It offers <strong>48 GB of VRAM</strong>, making it viable for many AI workloads</li><li>Pricing varies significantly depending on provider type</li><li>Thunder Compute provides the most cost-efficient option for on-demand usage</li></ul>

NVIDIA RTX A6000 Pricing Comparison (On-Demand)

Finding a cost-effective and reliable NVIDIA RTX A6000 instance can be difficult: many legacy providers have stopped listing the card in favor of more expensive alternatives.

Below is a comparison of what top cloud providers are charging per GPU-hour as of May 2026.

| Provider | RTX A6000 ($/hr) | Notes |
|---|---|---|
| Thunder Compute | $0.35 | Lowest on-demand price for secure, dedicated instances. |
| Vast.ai** | $0.41 | Uses crowdsourced infrastructure where prices fluctuate based on individual host reliability. |
| TensorDock | $0.45 | Marketplace-style deployment. |
| Sesterce | $0.49 | Budget-focused GPU cloud with public on-demand pricing. |
| Verda | $0.49 | Low-cost GPU cloud provider. |
| Hyperstack | $0.50 | Enterprise-grade cloud provider. |
| RunPod* | $0.77 | Offers a choice between lower-cost "Community Cloud" and higher-priced "Secure Cloud" instances. |
| JarvisLabs | $0.79 | Pay-as-you-go GPU instances for ML workloads. |
| CoreWeave | $1.28 | Focused on large-scale rendering and ML. |
| Paperspace | $1.89 | Public cloud platform. |
| Azure | N/A | No standalone RTX A6000 price is currently listed in our May 2026 reference data. |
| Lambda | N/A | No standalone RTX A6000 price is currently listed in our May 2026 reference data. |

\* Prices reflect on-demand hourly rates. Actual costs may vary based on region and availability. Last update: May 12, 2026.

Note: Major providers such as AWS, Google Cloud, and Oracle Cloud do not currently list on-demand NVIDIA RTX A6000 instances.

Methodology (Why You Can Trust These Numbers)

To provide a transparent look at the NVIDIA RTX A6000 price landscape, we conducted a comprehensive audit of the top GPU cloud providers.

Our comparison follows a strict set of criteria to ensure an "apples-to-apples" evaluation:

<ul><li><strong>On-demand only:</strong> We do not include reserved-instance, long-term commitment, or prepaid discounts in these figures.</li><li><strong>Same class of silicon:</strong> All prices refer specifically to NVIDIA RTX A6000 GPUs.</li><li><strong>Public price lists:</strong> Every figure comes from the provider's current pricing page or public documentation as of May 2026.</li><li><strong>US pricing:</strong> All rates are listed in USD. Rates in other global regions can differ by 5–20%.</li></ul>
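
To make the regional caveat concrete, here is a minimal Python sketch that turns a listed US rate into a plausible band for other regions. It assumes the 5–20% difference can go in either direction, which the price lists do not specify, and the function name `regional_rate_band` is ours, not part of any provider's tooling.

```python
def regional_rate_band(us_rate: float, diff: float) -> tuple[float, float]:
    """Return (low, high) hourly rates if a region differs from the US rate by `diff`.

    Assumption: the difference may be a discount or a surcharge, so the band
    is symmetric around the US rate.
    """
    if us_rate <= 0:
        raise ValueError("us_rate must be positive")
    if not 0 <= diff < 1:
        raise ValueError("diff must be a fraction between 0 and 1")
    return us_rate * (1 - diff), us_rate * (1 + diff)


if __name__ == "__main__":
    us_rate = 0.35  # Thunder Compute's listed RTX A6000 rate from the table
    for diff in (0.05, 0.20):  # the 5% and 20% bounds quoted above
        low, high = regional_rate_band(us_rate, diff)
        print(f"{diff:.0%} difference: ${low:.3f} to ${high:.3f} per GPU-hour")
```

Treat the result as a rough planning range only; the provider's own regional price list is the authoritative figure.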

Real-World RTX A6000 Price Impact

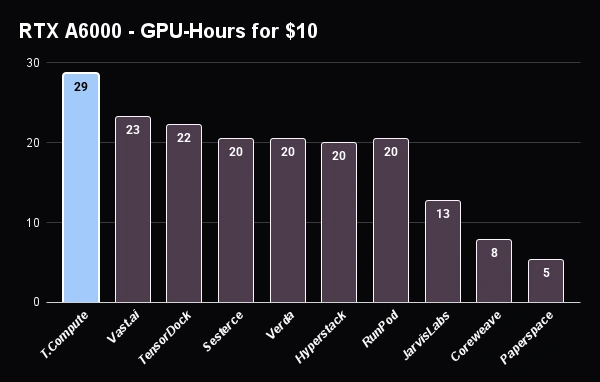

To show what the RTX A6000 price difference means in practice, we’ve calculated how many GPU-hours a $10 budget buys across providers.
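
The same calculation can be reproduced with a short Python sketch. The rates are copied from the comparison table in this guide; the helper names (`hours_for_budget`, `budget_table`) are illustrative, and the sketch assumes simple per-hour billing with no minimum charge, storage fees, or rounding to billing increments.

```python
# On-demand RTX A6000 rates in USD per GPU-hour, copied from the table in this guide.
PRICES_PER_HOUR = {
    "Thunder Compute": 0.35,
    "Vast.ai": 0.41,
    "TensorDock": 0.45,
    "Sesterce": 0.49,
    "Verda": 0.49,
    "Hyperstack": 0.50,
    "RunPod": 0.77,
    "JarvisLabs": 0.79,
    "CoreWeave": 1.28,
    "Paperspace": 1.89,
}


def hours_for_budget(budget_usd: float, price_per_hour: float) -> float:
    """GPU-hours a budget buys at a flat hourly rate (no fees or minimums assumed)."""
    if price_per_hour <= 0:
        raise ValueError("price_per_hour must be positive")
    return budget_usd / price_per_hour


def budget_table(budget_usd: float, prices: dict = PRICES_PER_HOUR) -> list:
    """Return (provider, hours) pairs, sorted from most to fewest hours."""
    rows = [(name, hours_for_budget(budget_usd, rate)) for name, rate in prices.items()]
    return sorted(rows, key=lambda row: row[1], reverse=True)


if __name__ == "__main__":
    for name, hours in budget_table(10.0):
        print(f"{name:<16} {hours:5.1f} GPU-hours")
```

At the listed rates, $10 covers roughly 28.6 hours on Thunder Compute and about 5.3 hours on Paperspace.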

Thunder Compute also offers NVIDIA A100 GPUs for $0.78/hr, which is about what some providers charge for an RTX A6000, for a higher-performance GPU.

Why this matters for developers

The RTX A6000 sits in a unique position in the GPU market: significantly cheaper than data center GPUs like A100 or H100, while still offering enough VRAM for meaningful AI workloads.

For developers, this means:

<ul><li>You can fine-tune mid-sized models without paying enterprise GPU premiums</li><li>You can scale horizontally using multiple cheaper GPUs instead of a single expensive one</li><li>You can validate ideas before committing to higher-end infrastructure</li></ul>
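
As a rough illustration of the horizontal-scaling point, the sketch below estimates how many RTX A6000s fit within a given hourly budget and how much VRAM they provide in total. The function names are hypothetical, the example budget of $0.78/hr is the A100 rate quoted in this guide, and the $0.35 rate is Thunder Compute's listed A6000 price. Note the assumption baked into `total_vram_gb`: memory on separate GPUs is not one shared pool, so using it requires a framework that splits the model or data across devices.

```python
from decimal import Decimal

A6000_VRAM_GB = 48  # per-GPU VRAM stated in this guide


def gpus_within_hourly_budget(hourly_budget: str, price_per_gpu_hour: str) -> int:
    """Whole number of GPUs affordable per hour; Decimal avoids float rounding."""
    budget = Decimal(hourly_budget)
    price = Decimal(price_per_gpu_hour)
    if price <= 0:
        raise ValueError("price_per_gpu_hour must be positive")
    return int(budget // price)


def fleet_summary(hourly_budget: str, price_per_gpu_hour: str) -> dict:
    """Summarize GPU count, hourly cost, and summed VRAM for the budget."""
    count = gpus_within_hourly_budget(hourly_budget, price_per_gpu_hour)
    return {
        "gpus": count,
        "hourly_cost": Decimal(price_per_gpu_hour) * count,
        # Summed across devices; not addressable as a single memory pool.
        "total_vram_gb": count * A6000_VRAM_GB,
    }


if __name__ == "__main__":
    summary = fleet_summary("0.78", "0.35")
    print(summary)
```

Whether several smaller GPUs actually beat one larger GPU depends on the workload's communication overhead, which this arithmetic does not capture.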

Compare top platforms and find the lowest prices in our comprehensive guide to the cheapest cloud GPU providers.

FAQ

What is the NVIDIA RTX A6000?

The NVIDIA RTX A6000 is a professional-grade workstation GPU built on the Ampere architecture. It features 48 GB of GDDR6 memory with ECC and 10,752 CUDA cores, and targets AI and professional visualization workloads.

What is the NVIDIA RTX A6000 used for?

The RTX A6000 is used for AI and machine learning workloads (such as LLM inference and fine-tuning), professional 3D rendering, high-resolution video editing, and scientific simulations.

Why is the NVIDIA RTX A6000 so expensive?

The high cost is due to its 48 GB of specialized ECC memory and enterprise-grade drivers that are specifically certified for professional software stability.

Are these RTX A6000 prices on-demand or reserved?

All RTX A6000 prices in this guide are on-demand rates with no reserved commitments or prepaid discounts, based on May 2026 price lists.