Use Thunder Compute from your own OpenClaw instance to discover GPUs, launch GPU instances, run commands, create snapshots, tear instances down, and report cost from chat.

Public beta: This integration creates real Thunder Compute resources. Start with the smoke test below, confirm teardown, and check cost reporting before using it for longer workloads.

What You Install

The beta integration has two parts:

- Thunder Compute skill: teaches the agent how to work with Thunder Compute safely.

- Thunder Compute plugin bridge: exposes native OpenClaw `tc_*` tools that call the Thunder Compute MCP server.

What You Can Ask For

After setup, you can ask OpenClaw to:

- list live GPU availability

- compare GPU pricing

- list available templates

- spin up an A100 or H100

- run `nvidia-smi` or your own shell command

- create a snapshot when you ask for one

- delete the instance

- report the current invoice impact

Prerequisites

Before you begin, make sure you have:

- OpenClaw installed and working

- a model provider configured in OpenClaw

- Node.js and npm available

- a Thunder Compute account

- browser access for Thunder Compute OAuth

- the Thunder Compute OpenClaw beta plugin bundle

tnr CLI.

Recommended OpenClaw version:

Install The Plugin

Clone the official Thunder Compute OpenClaw plugin repository, then enter the plugin directory:

The plugin directory should contain `openclaw.plugin.json`, `package.json`, and `index.ts`.

Important: Use `tools.alsoAllow`, not `tools.allow`. `tools.alsoAllow` adds Thunder Compute tools without replacing your existing tool configuration.
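As a rough illustration of the additive behavior, the sketch below appends an entry to an `alsoAllow` list in an in-memory configuration and leaves any existing `allow` list untouched. The nested `tools` dictionary layout, the `add_also_allow` helper, and the sample `allow` entries are assumptions made for this example; this page only names the `tools.alsoAllow` and `tools.allow` settings, so check your own OpenClaw configuration for the actual format.

```python
import copy


def add_also_allow(config: dict, entry: str) -> dict:
    """Return a copy of config with entry present in tools.alsoAllow.

    Hypothetical helper: the {"tools": {"alsoAllow": [...]}} layout is an
    assumption derived from the dotted setting name, not a documented schema.
    """
    updated = copy.deepcopy(config)
    tools = updated.setdefault("tools", {})
    also_allow = tools.setdefault("alsoAllow", [])
    if entry not in also_allow:  # keep the operation idempotent
        also_allow.append(entry)
    return updated


# Illustrative existing configuration; the "allow" entries are made up.
existing = {"tools": {"allow": ["read", "write"]}}
updated = add_also_allow(existing, "thunder-compute")
print(updated)
```

The existing `allow` list is carried over unchanged, which is the property the note above describes.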

Install Or Verify The Skill

The beta plugin bundle includes the Thunder Compute skill under `skills/thunder-compute/SKILL.md`. That gives users one clone that includes both the executable plugin and the behavior instructions.

Verify The Plugin Loaded

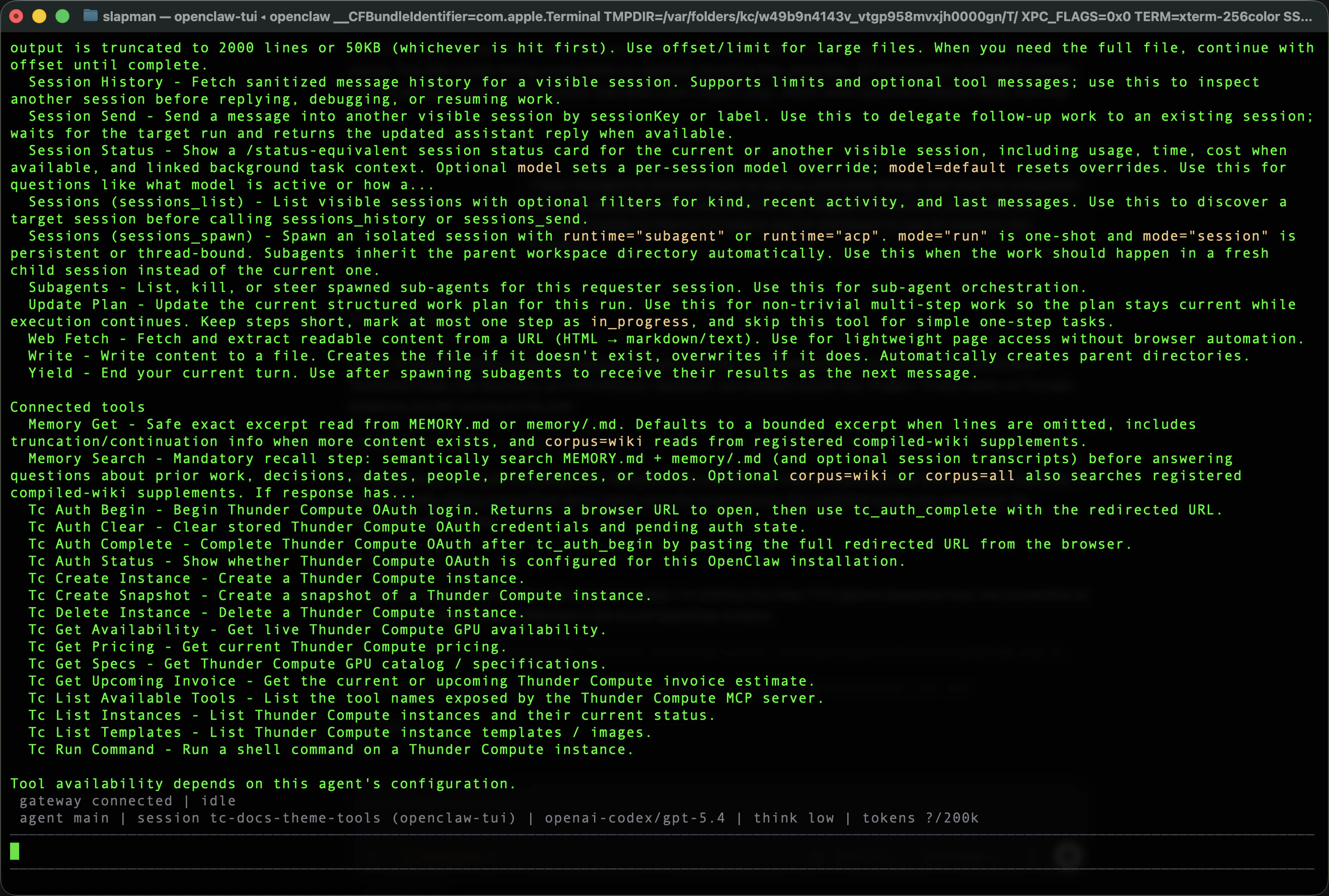

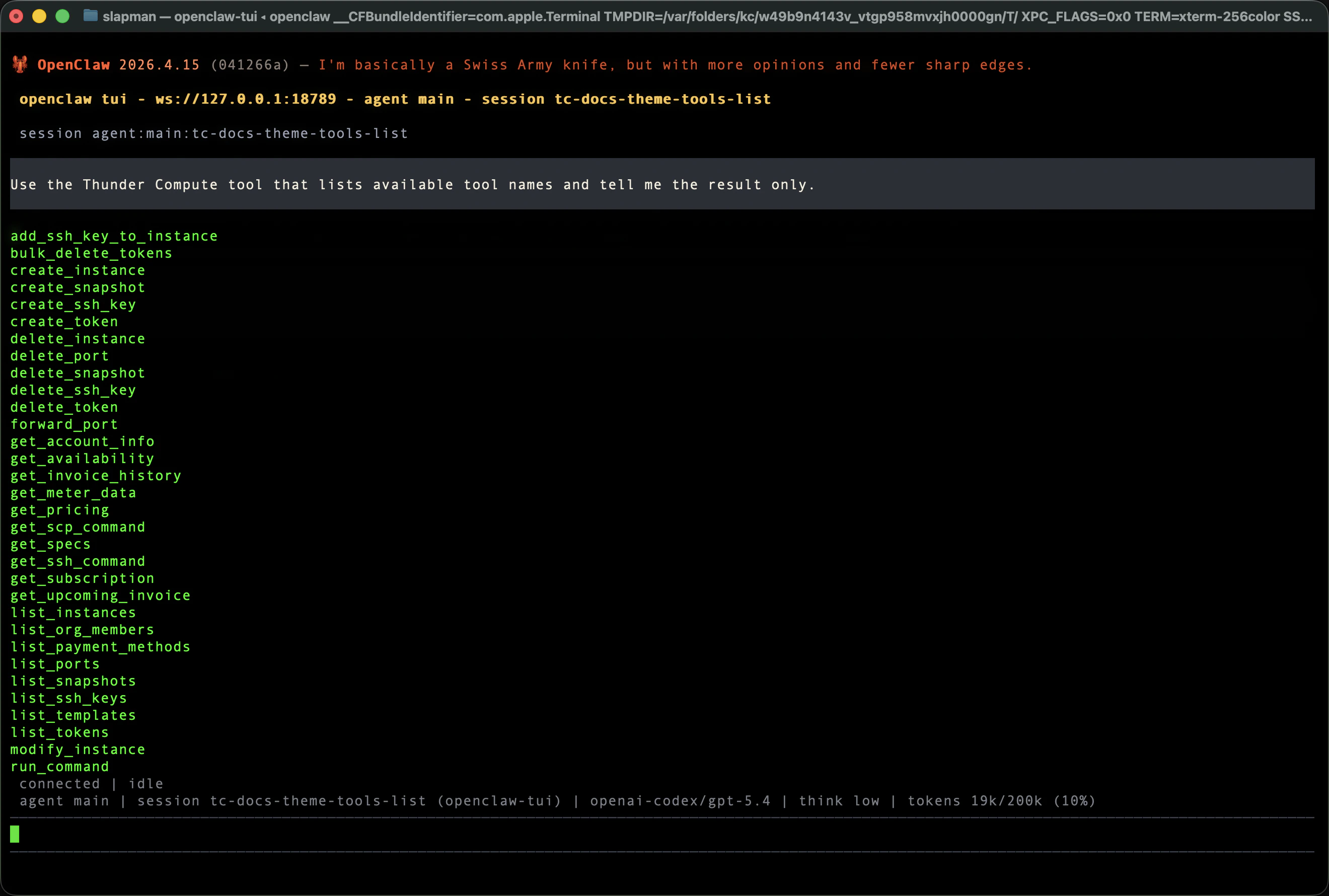

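Run `openclaw plugins inspect thunder-compute` to confirm the plugin is installed. Once loaded, the plugin provides these tools:

- `tc_auth_status`

- `tc_auth_begin`

- `tc_auth_complete`

- `tc_auth_clear`

- `tc_list_available_tools`

- `tc_get_specs`

- `tc_get_availability`

- `tc_get_pricing`

- `tc_list_templates`

- `tc_list_instances`

- `tc_create_instance`

- `tc_run_command`

- `tc_delete_instance`

- `tc_create_snapshot`

- `tc_get_upcoming_invoice`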
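If you want to compare a reported tool listing against the tool names in this section, a small set difference is enough. This is a minimal sketch: `missing_tools` is a hypothetical helper, and how you obtain the reported list (copied from the inspect output or from the agent's reply) is left to you.

```python
from typing import Iterable, List

# Tool names taken from this section of the guide.
EXPECTED_TOOLS = {
    "tc_auth_status", "tc_auth_begin", "tc_auth_complete", "tc_auth_clear",
    "tc_list_available_tools", "tc_get_specs", "tc_get_availability",
    "tc_get_pricing", "tc_list_templates", "tc_list_instances",
    "tc_create_instance", "tc_run_command", "tc_delete_instance",
    "tc_create_snapshot", "tc_get_upcoming_invoice",
}


def missing_tools(reported: Iterable[str]) -> List[str]:
    """Return the expected tool names that are absent from `reported`."""
    return sorted(EXPECTED_TOOLS - set(reported))


# Illustrative input: a listing with two tools absent.
reported = sorted(EXPECTED_TOOLS - {"tc_run_command", "tc_create_snapshot"})
print(missing_tools(reported))  # ['tc_create_snapshot', 'tc_run_command']
```

An empty result means every tool named above was reported.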

Authenticate With Thunder Compute

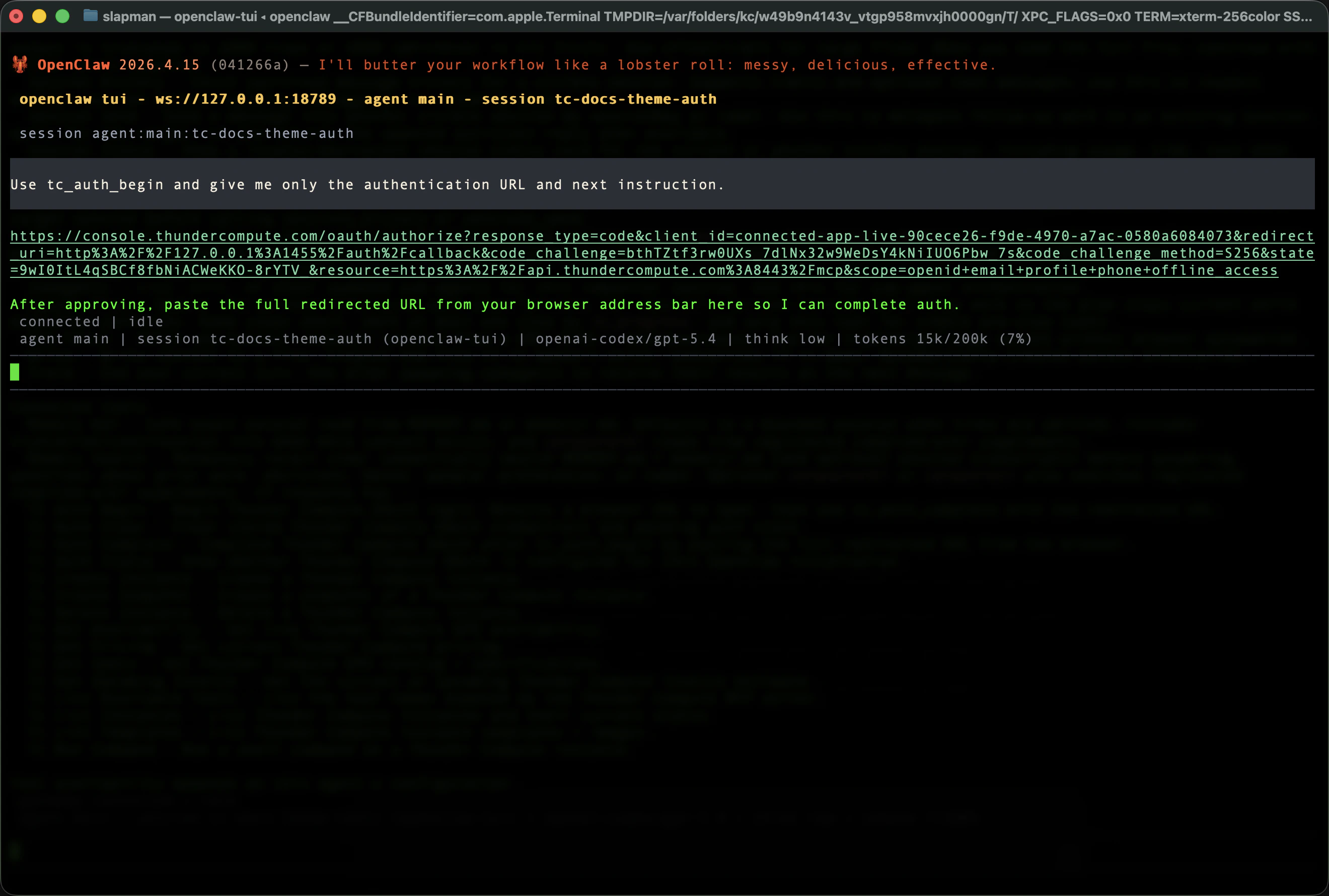

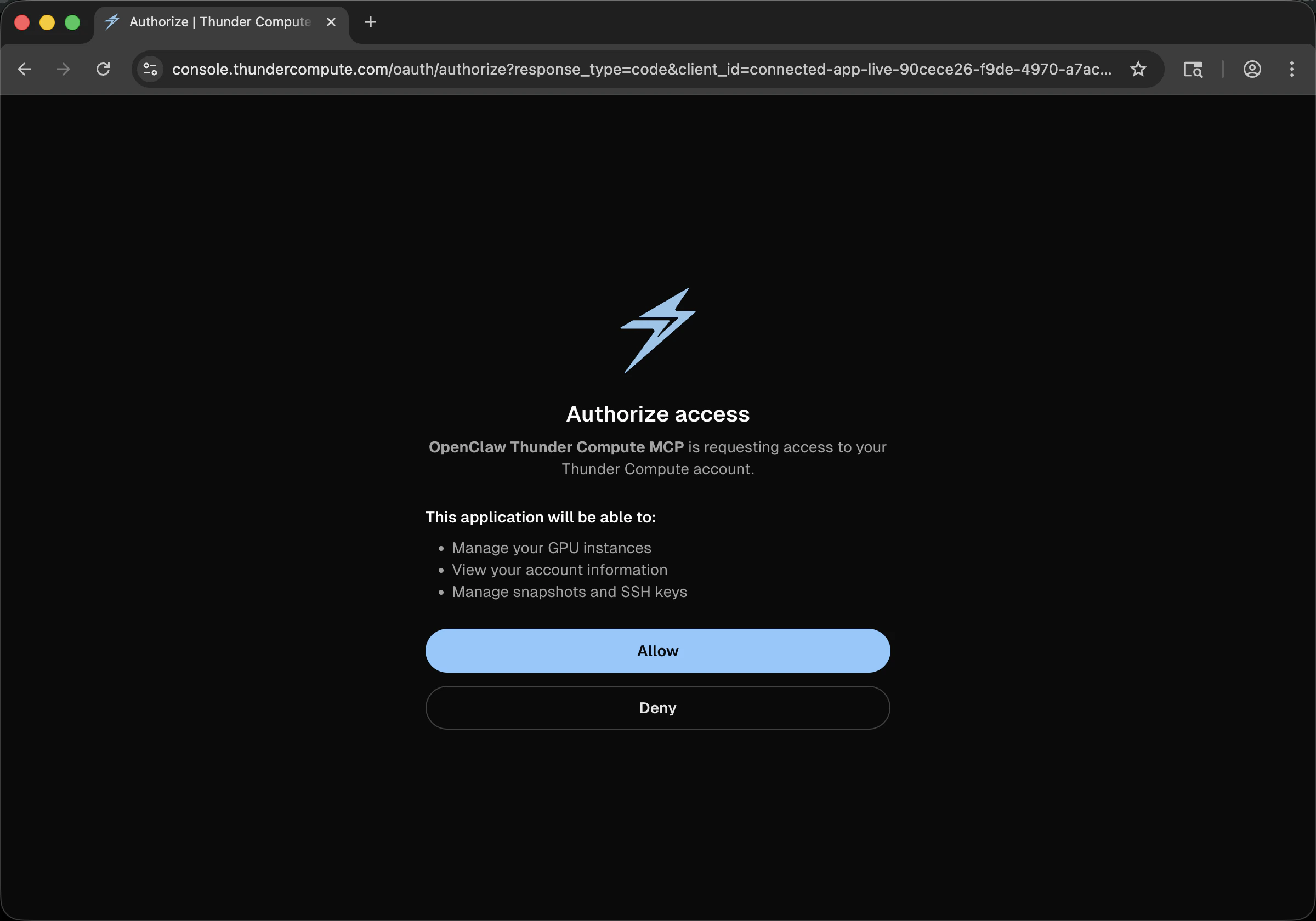

Thunder Compute uses browser-based OAuth. You do not need to paste a static API key into the skill. In OpenClaw, ask:

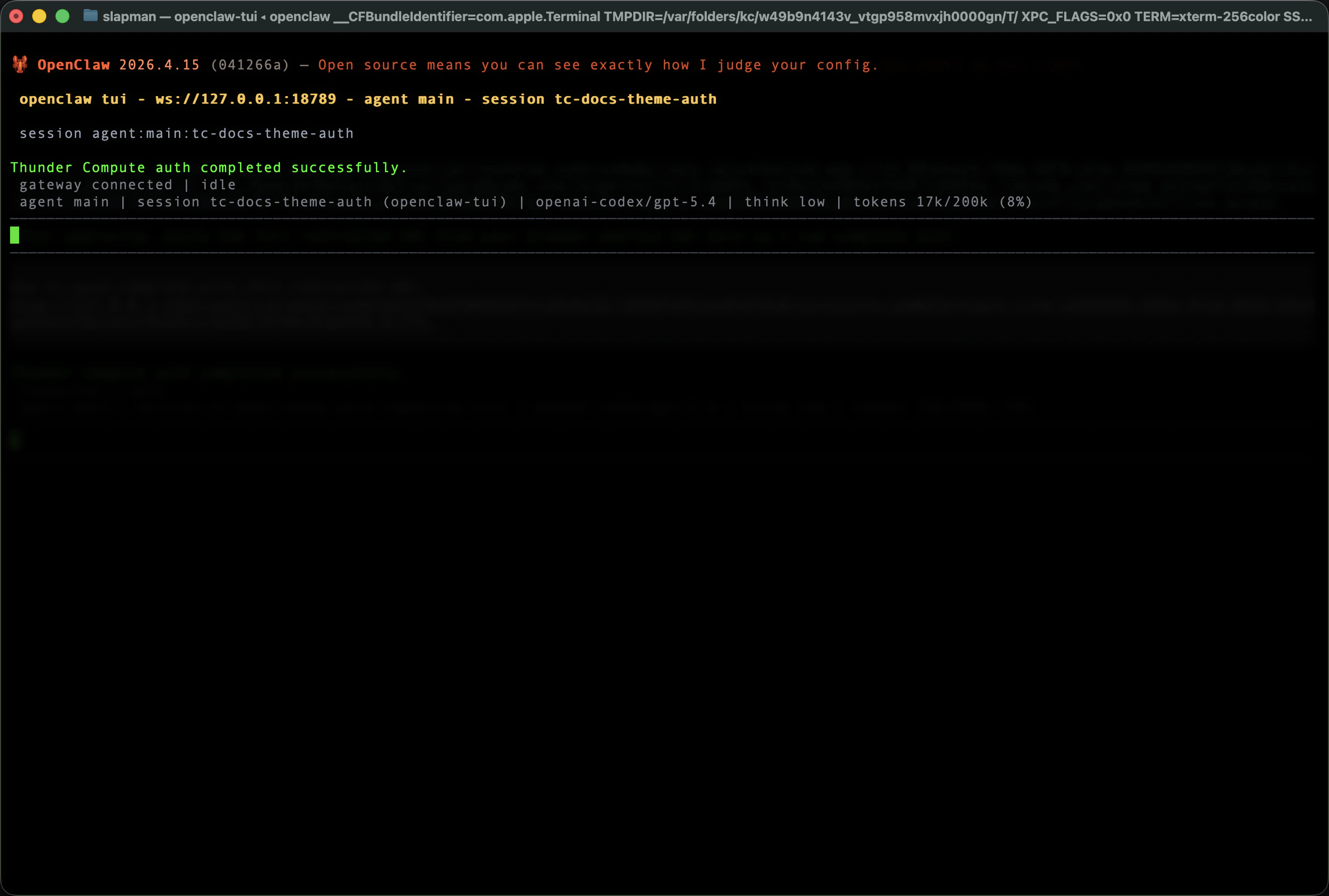

The redirected callback URL contains a short-lived authorization code. Treat it as sensitive until authentication is complete.

Thunder Compute access tokens are short-lived. The plugin stores the refresh token from OAuth and refreshes access automatically when possible. To check the current auth state, ask:

tc_auth_begin and tc_auth_complete.
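To see why the callback URL deserves care, the sketch below shows where an authorization code typically sits in such a URL and how to strip it before logging. It assumes the code travels in a `code` query parameter, as in the standard OAuth 2.0 authorization code flow; this page does not state Thunder Compute's exact parameter names, and the URL, host, and values here are made up.

```python
from typing import Optional
from urllib.parse import parse_qs, urlparse


def extract_auth_code(callback_url: str) -> Optional[str]:
    """Return the `code` query parameter from a callback URL, or None."""
    values = parse_qs(urlparse(callback_url).query).get("code")
    return values[0] if values else None


def redact(callback_url: str) -> str:
    """Return the URL without query string or fragment, for safe logging."""
    return urlparse(callback_url)._replace(query="", fragment="").geturl()


# Illustrative URL only; not a real Thunder Compute callback.
url = "http://localhost:8080/callback?code=abc123&state=xyz"
print(extract_auth_code(url))  # abc123
print(redact(url))             # http://localhost:8080/callback
```

Logging only the redacted form keeps the short-lived code out of shell history and chat transcripts.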

Verify Live Thunder Compute Connectivity

Ask OpenClaw to list the live MCP tool surface:

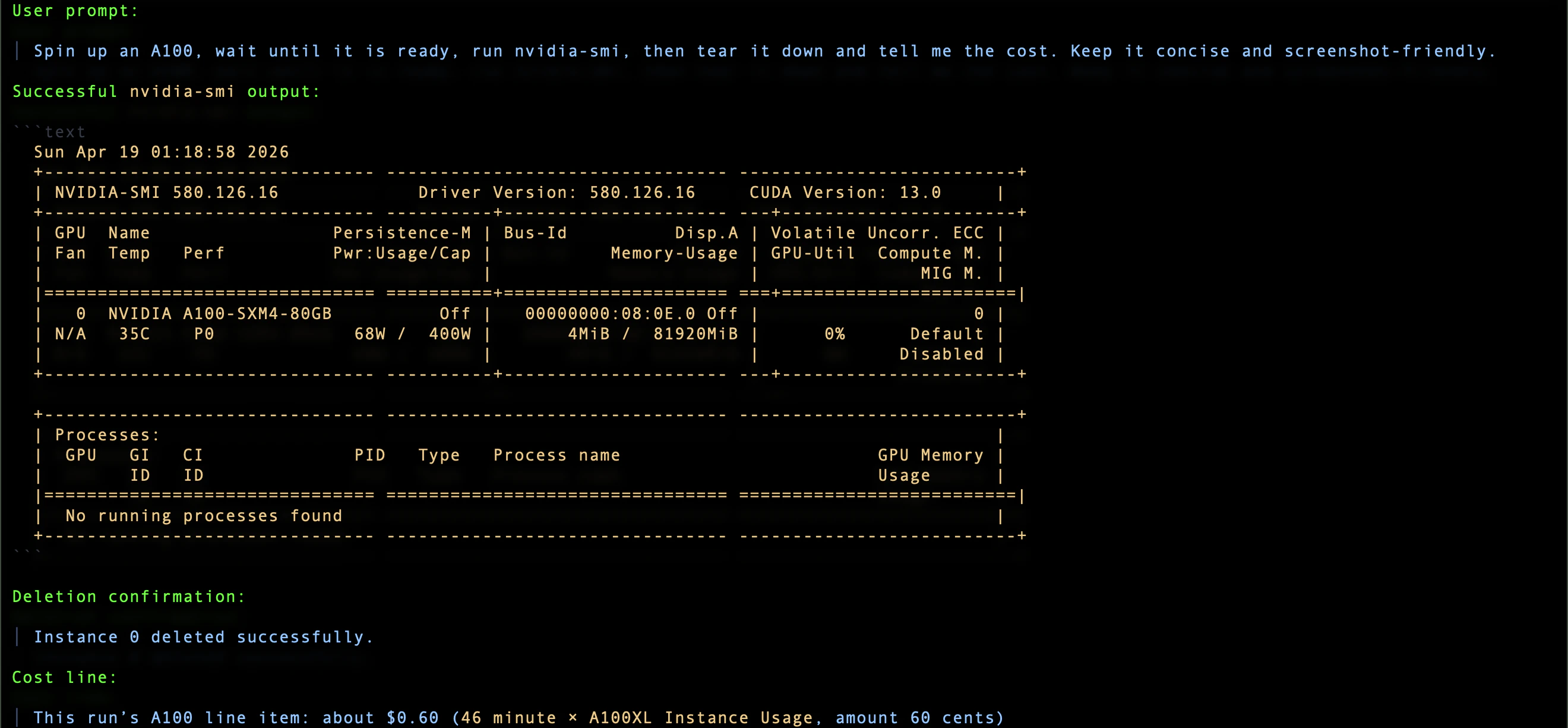

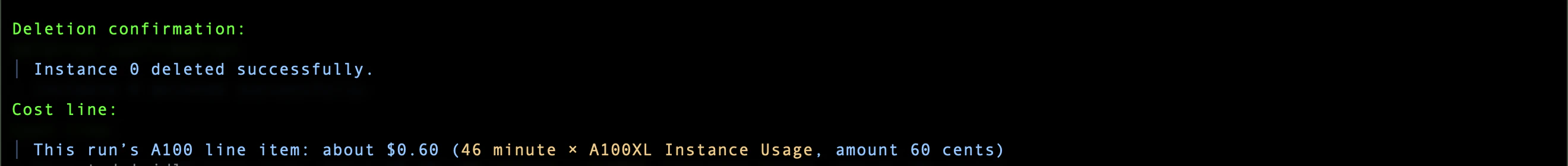

Run Your First GPU Smoke Test

Use a short lifecycle test before doing real work:

- Create a Thunder Compute A100 instance.

- Wait until it is command-ready.

- Run `nvidia-smi`.

- Delete the instance.

- Report the invoice line or approximate cost.

The `nvidia-smi` output should show an NVIDIA GPU, a driver version, and a CUDA version, and the command should finish with exit code 0.

Thunder Compute instances may take a minute to become ready. This is expected; the agent should wait until the instance is running before retrying the command.
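The expected smoke-test result can be checked mechanically. The sketch below looks for the driver version, the CUDA version, and a GPU row in captured `nvidia-smi` text. The `SmiCheck` type and `check_nvidia_smi` helper are hypothetical, the sample output is abbreviated and illustrative, and the GPU-row pattern assumes a driver that prints names with an `NVIDIA` prefix.

```python
import re
from dataclasses import dataclass
from typing import Optional


@dataclass
class SmiCheck:
    gpu_name: Optional[str]
    driver_version: Optional[str]
    cuda_version: Optional[str]
    exit_code: int

    @property
    def passed(self) -> bool:
        """True when all three fields were found and the exit code is 0."""
        found = (self.gpu_name, self.driver_version, self.cuda_version)
        return self.exit_code == 0 and None not in found


def check_nvidia_smi(stdout: str, exit_code: int) -> SmiCheck:
    """Extract the fields the smoke test cares about from nvidia-smi text."""
    driver = re.search(r"Driver Version:\s*([\d.]+)", stdout)
    cuda = re.search(r"CUDA Version:\s*([\d.]+)", stdout)
    # A GPU row starts with "|", an index, then the device name.
    gpu = re.search(r"^\|\s+\d+\s+(NVIDIA [^|]*?)\s{2,}", stdout, re.MULTILINE)
    return SmiCheck(
        gpu_name=gpu.group(1) if gpu else None,
        driver_version=driver.group(1) if driver else None,
        cuda_version=cuda.group(1) if cuda else None,
        exit_code=exit_code,
    )


# Abbreviated, illustrative output; real output has more rows and columns.
SAMPLE = (
    "| NVIDIA-SMI 535.104.05   Driver Version: 535.104.05   CUDA Version: 12.2     |\n"
    "|   0  NVIDIA A100-SXM4-80GB          On  | 00000000:00:04.0 Off |          0 |\n"
)
result = check_nvidia_smi(SAMPLE, exit_code=0)
print(result.passed, result.gpu_name)
```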

Everyday Usage Prompts

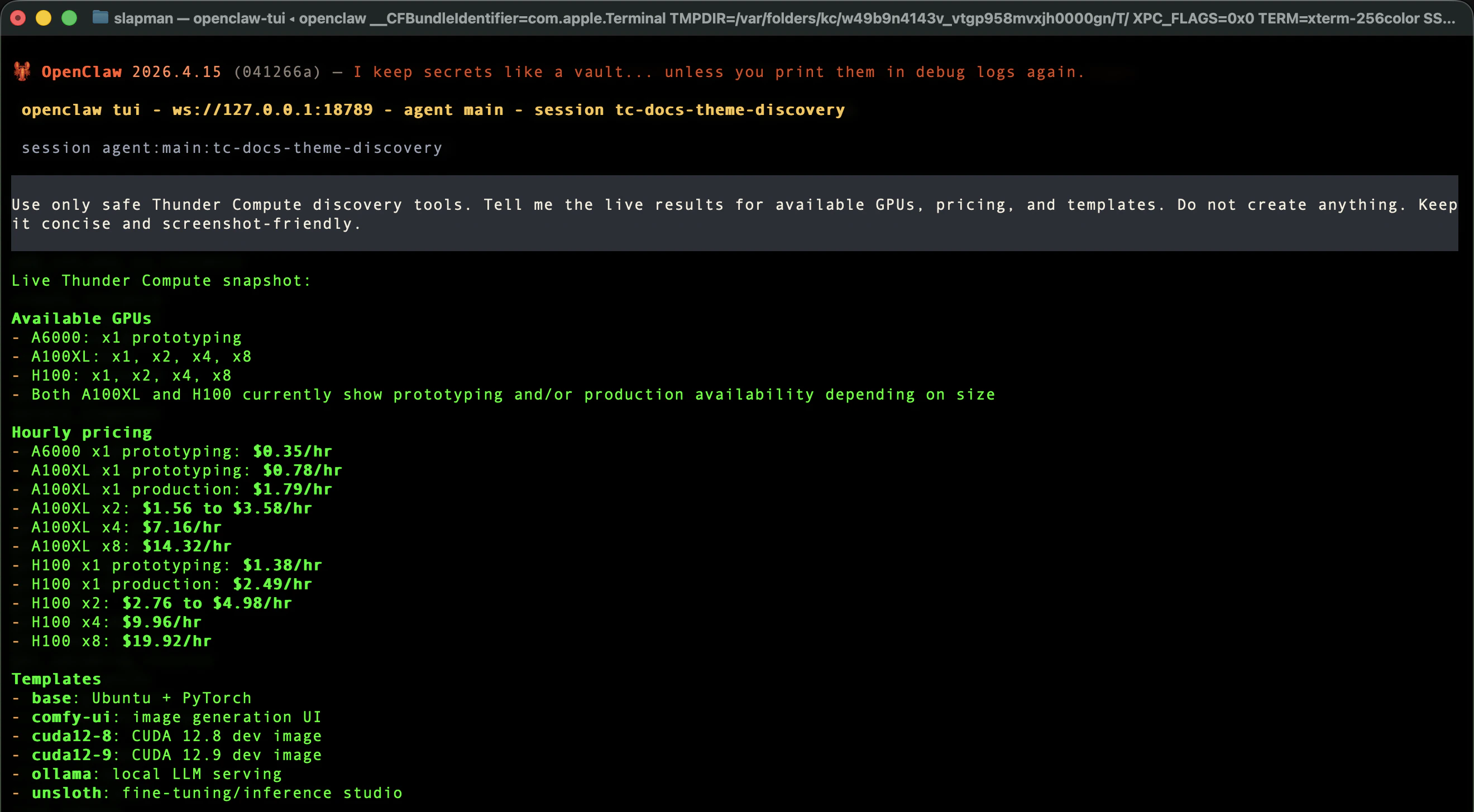

Check availability and pricing:

Common templates include `base`, `ollama`, and `comfy-ui`. Template services may require a start command such as `start-ollama` or `start-comfyui`.

Create a snapshot only when you want to preserve state:

How The Agent Should Behave

The Thunder Compute skill instructs the agent to:

- use live discovery when the request is open-ended

- create the instance the user asked for when the request is specific

- wait through `QUEUED` or `STARTING` until the instance is ready

- run the requested command

- show stdout, stderr, and exit code when relevant

- create snapshots only when asked

- delete instances by default

- report cost in the same response as teardown
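The teardown-by-default behavior in the list above can be pictured as a `try`/`finally` around the command. This is only a sketch of the control flow, not the skill's implementation: `run_with_teardown` and its callable parameters are hypothetical stand-ins for the agent's tool calls, and waiting for readiness is omitted for brevity.

```python
from typing import Callable, Dict, Optional


def run_with_teardown(
    create: Callable[[], str],
    run: Callable[[str], Dict[str, object]],
    delete: Callable[[str], None],
    cost: Callable[[], str],
    snapshot: Optional[Callable[[str], None]] = None,
) -> Dict[str, object]:
    """Create, run, optionally snapshot, always delete, then report cost."""
    instance_id = create()
    try:
        result = run(instance_id)
        if snapshot is not None:  # snapshots happen only when requested
            snapshot(instance_id)
    finally:
        delete(instance_id)  # teardown runs even if the command raised
    # Cost is reported together with the teardown confirmation.
    return {"command": result, "deleted": instance_id, "cost": cost()}


# Fake callables that record call order; all values are illustrative.
calls = []
report = run_with_teardown(
    create=lambda: calls.append("create") or "inst-0",
    run=lambda _id: calls.append("run")
    or {"stdout": "ok", "stderr": "", "exit_code": 0},
    delete=lambda _id: calls.append("delete"),
    cost=lambda: calls.append("cost") or "see upcoming invoice",
)
print(calls)  # ['create', 'run', 'delete', 'cost']
```

No snapshot call appears in the recorded order because none was requested.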

Known Beta Caveats

Skill And Plugin Are Both Required

The skill alone does not create GPU instances. It only teaches behavior. Real Thunder Compute operations happen through the `thunder-compute` plugin tools.

New Instances May Not Be Command-Ready Immediately

After `tc_create_instance` succeeds, an instance may briefly report `QUEUED` or `STARTING`. If `tc_run_command` says the instance is not running yet, ask the agent to poll `tc_list_instances` until the status is `RUNNING`, then retry the command.
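The poll-then-retry pattern described above might look like the following. It is a minimal sketch under stated assumptions: `list_instances` is a hypothetical callable standing in for `tc_list_instances`, the `id` and `status` field names are illustrative, and treating any status other than `QUEUED`, `STARTING`, or `RUNNING` as an error is a choice made for this example.

```python
import time
from typing import Callable, Dict, List


def wait_until_running(
    list_instances: Callable[[], List[Dict[str, str]]],
    instance_id: str,
    timeout_s: float = 300.0,
    interval_s: float = 5.0,
    sleep: Callable[[float], None] = time.sleep,
) -> None:
    """Poll until the instance reports RUNNING, or raise on timeout."""
    deadline = time.monotonic() + timeout_s
    while True:
        status = next(
            (i["status"] for i in list_instances() if i["id"] == instance_id),
            None,  # instance not listed
        )
        if status == "RUNNING":
            return
        if status not in ("QUEUED", "STARTING"):
            raise RuntimeError(f"unexpected status for {instance_id}: {status}")
        if time.monotonic() >= deadline:
            raise TimeoutError(f"{instance_id} not RUNNING after {timeout_s}s")
        sleep(interval_s)


# Fake listing that advances one state per poll; values are illustrative.
states = iter(["QUEUED", "STARTING", "RUNNING"])
polls: List[str] = []


def fake_list() -> List[Dict[str, str]]:
    status = next(states)
    polls.append(status)
    return [{"id": "inst-0", "status": status}]


wait_until_running(fake_list, "inst-0", sleep=lambda _s: None)
print(polls)  # ['QUEUED', 'STARTING', 'RUNNING']
```

Only after this returns would the command be retried.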

GPU Availability Changes

Availability is live. An H100 or A100 shape that was available earlier may become unavailable later. If creation fails because of availability, ask the agent to run discovery and show the current options.

Template Services Need Runtime Verification

Some templates can provision successfully while a user-facing service still needs verification or manual startup. For example, a Jupyter-backed template may require retrieving a token from inside the instance:

Related Thunder Compute Docs

These Thunder Compute docs explain the underlying concepts used by this OpenClaw beta:

- Creating Instances

- Instance Templates

- Technical Specifications

- GPT-OSS 120B on Thunder Compute

- Docs MCP Server

Troubleshooting

Thunder Compute tools do not appear

Check:

- the plugin is installed (verify with `openclaw plugins inspect thunder-compute`)

- the plugin is enabled

- `tools.alsoAllow` includes `"thunder-compute"`

- the gateway was restarted after configuration

Authentication fails or expires

First check auth status:

Instance creation fails

Ask for live options:

The command says the instance is still starting

Ask:

You are unsure whether anything is still billing

Ask:

Summary

Thunder Compute for OpenClaw beta gives your agent a real GPU lifecycle:

- discover live GPU options

- authenticate with Thunder Compute

- create a GPU instance

- run commands

- tear down by default

- report cost